[Environment] Production

INSERT /*+ ... */ INTO tuya_aimemory_prod.__idx_500583_idx_1764334847108061_index_snapshot_data_table PARTITION(p0, p3)(__key_20_1748264415604471,id,__vid_1748264415602220,__vector_20_1748264415602775,__data_20_1748264415604809) SELECT /*+ index(ai_doc primary) ob_ddl_schema_version(ai_doc, 1764334848743784) */ __key_20_1748264415604471 AS __key_20_1748264415604471,id AS id,__vid_1748264415602220 AS __vid_1748264415602220,__vector_20_1748264415602775 AS __vector_20_1748264415602775,__data_20_1748264415604809 AS __data_20_1748264415604809 FROM tuya_aimemory_prod.ai_doc PARTITION(p0, p3) as of snapshot 1764334848906957000

辞霜

December 1, 2025, 14:42

#3

Are you using vector indexes? This looks like a full refresh.

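Yes, vectors are in use. Does this operation run every day for every vector table? This table has existed for quite a while, so the operation should have been running daily all along, yet we rarely noticed it before. Is there any documentation on this?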

辞霜

December 1, 2025, 18:03

#5

It runs every day. The long execution time of this SQL is probably caused by load on the database.

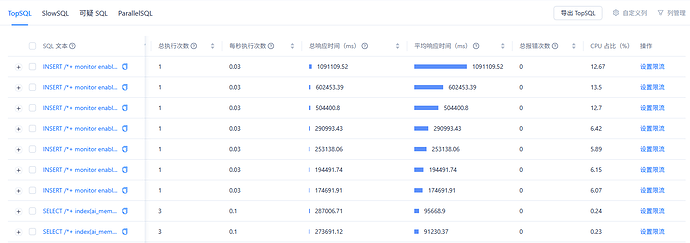

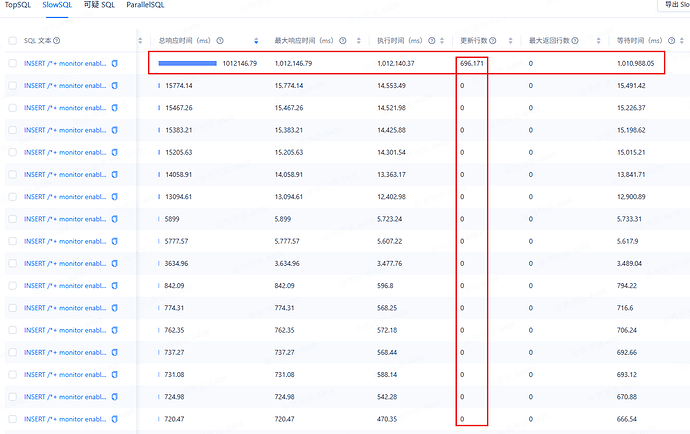

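Hello, the problem occurred again today. Compared with other SQL statements of this kind, this one updated a large amount of data while the others did not, and it took a long time to execute. We did not see any resource bottleneck. Are there other directions to investigate?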

辞霜

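December 15, 2025, 15:43

#7

Please send the SQL text. This statement looks slow because of a long wait time, possibly waiting on a lock. Open the execution plan and check where the execution time is being spent.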

INSERT /*+ ... */ INTO tuya_aimemory_prod.__idx_520618_idx_1765767439285897_index_snapshot_data_table (__key_23_1760929026937846,id,gmt_create,__vid_1760929026935011,__vector_23_1760929026935543,__data_23_1760929026938118) SELECT /*+ index(rag_knowledge_document_slice primary) ob_ddl_schema_version(rag_knowledge_document_slice, 1765767439926040) */ __key_23_1760929026937846 AS __key_23_1760929026937846,id AS id,gmt_create AS gmt_create,__vid_1760929026935011 AS __vid_1760929026935011,__vector_23_1760929026935543 AS __vector_23_1760929026935543,__data_23_1760929026938118 AS __data_23_1760929026938118 FROM tuya_aimemory_prod.rag_knowledge_document_slice as of snapshot 1765767440033323000

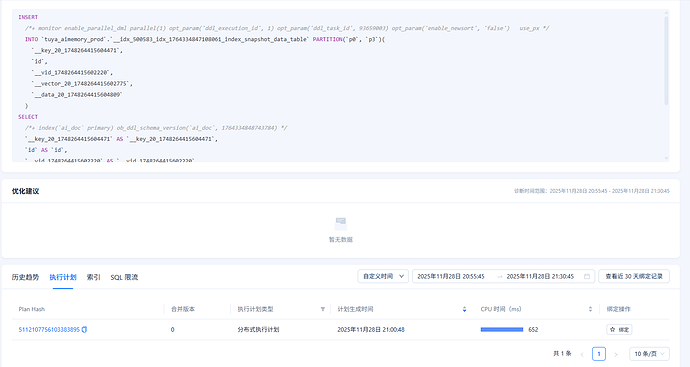

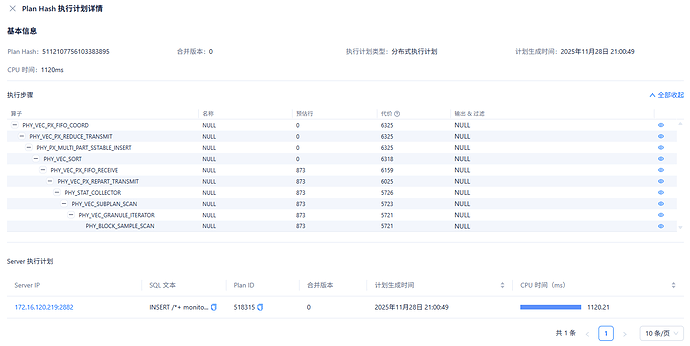

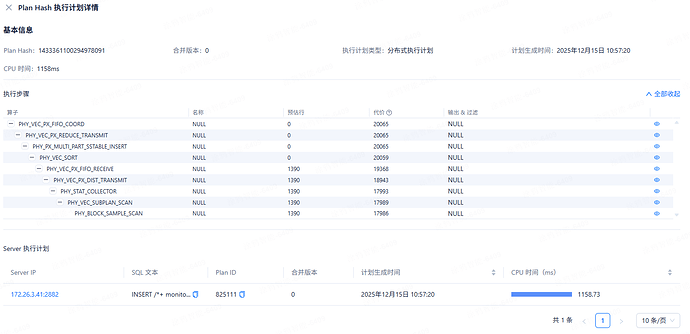

Here are the execution plan and the SQL.

辞霜

December 15, 2025, 16:14

#9

The actual execution time here is 1158 ms, and almost all of it is spent waiting to execute.

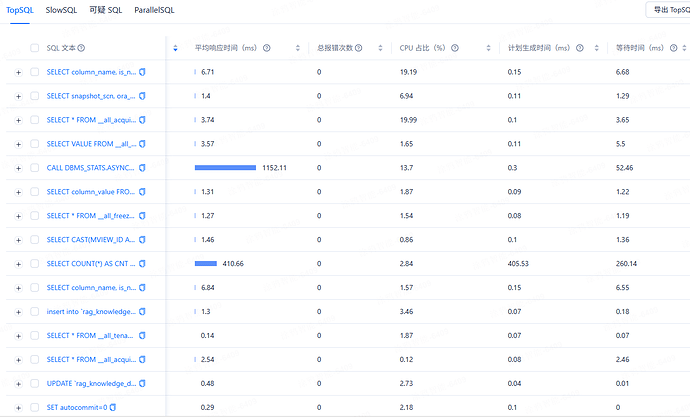

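Does the waiting state mean the statement is simply sitting idle? Why is it waiting? I checked the top SQL and quite a few statements are of this kind, almost all of them spending their time waiting. How can we troubleshoot this?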

辞霜

December 15, 2025, 18:06

#11

select * from gv$ob_sql_audit where sql_id='xxxxx';
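The output of this query is wide, so it can help to reduce each row to a few numbers. Below is a minimal Python sketch, not an official tool, that splits one audit row into queue time, wait time, and everything else. It assumes the row has already been fetched as a dict and that the `ELAPSED_TIME`, `QUEUE_TIME`, and `TOTAL_WAIT_TIME_MICRO` columns are in microseconds; check this against the view definition for your version. The function name `summarize_audit_row` and the sample numbers are hypothetical.

```python
from typing import Mapping


def summarize_audit_row(row: Mapping[str, int]) -> dict:
    """Break one gv$ob_sql_audit row into queue, wait, and other time (ms).

    Assumption: all three input columns are reported in microseconds.
    """
    elapsed = row["ELAPSED_TIME"]
    queue = row.get("QUEUE_TIME", 0)
    wait = row.get("TOTAL_WAIT_TIME_MICRO", 0)
    # Whatever is neither queueing nor waiting is treated as "other" work.
    other = max(elapsed - queue - wait, 0)
    share = (queue + wait) / elapsed if elapsed else 0.0
    return {
        "elapsed_ms": elapsed / 1000,
        "queue_ms": queue / 1000,
        "wait_ms": wait / 1000,
        "other_ms": other / 1000,
        "waiting_share": round(share, 3),
    }


# Made-up numbers for illustration only; they are not taken from this cluster.
example = {
    "ELAPSED_TIME": 1_158_000,
    "QUEUE_TIME": 8_000,
    "TOTAL_WAIT_TIME_MICRO": 1_100_000,
}
print(summarize_audit_row(example))
```

A row whose `waiting_share` is close to 1 is spending nearly all of its elapsed time queued or waiting rather than doing work, which is the pattern described above.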

Hello, I picked two SQL statements at random. Could you take a look?

*************************** 38. row ***************************
INSERT /*+ ... */ INTO tuya_aimemory_prod.__idx_500612_idx_1765766796854809_index_id_table PARTITION(M202506,M202509,P202512,P202603)(__scn_26_1753843499651391, __vid_1753843499647861, __type_26_1753843499648191, biz_time, __vector_26_1753843499648596) SELECT /*+ index(ai_memory_alphago_memory_fact primary) ob_ddl_schema_version(ai_memory_alphago_memory_fact, 1765766797204232) */ __scn_26_1753843499651391 AS __scn_26_1753843499651391, __vid_1753843499647861 AS __vid_1753843499647861, __type_26_1753843499648191 AS __type_26_1753843499648191, biz_time AS biz_time, __vector_26_1753843499648596 AS __vector_26_1753843499648596 from tuya_aimemory_prod.ai_memory_alphago_memory_fact PARTITION(M202506,M202509,P202512,P202603) as of snapshot 1765766797402245001 order by 1, 2, 3

INSERT /*+ monitor enable_parallel_dml parallel(1) opt_param('ddl_execution_id', 2) opt_param('ddl_task_id', 59522294) opt_param('enable_newsort', 'false') use_px */ INTO tuya_aimemory_prod.__idx_500612_idx_1765766796854809 PARTITION(M202506,M202509,P202512,P202603)(__vid_1753843499647861, __type_26_1753843499648191, biz_time, __vector_26_1753843499648596) SELECT /*+ index(ai_memory_alphago_memory_fact primary) ob_ddl_schema_version(ai_memory_alphago_memory_fact, 1765766797204232) */ __vid_1753843499647861 AS __vid_1753843499647861, __type_26_1753843499648191 AS __type_26_1753843499648191, biz_time AS biz_time, __vector_26_1753843499648596 AS __vector_26_1753843499648596 from tuya_aimemory_prod.ai_memory_alphago_memory_fact PARTITION(M202506,M202509,P202512,P202603) as of snapshot 1765766797235640000 order by 1, 2

辞霜

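December 16, 2025, 10:05

#13

This SQL is a vector index maintenance operation. When there are many DML operations, the system maintains the index automatically, and this cannot be optimized. I recommend upgrading the cluster: 435bp2 has quite a few bugs, and newer versions have optimized index maintenance.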
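To separate these maintenance statements from business SQL when reading a top SQL list, a rough text filter may be enough. The sketch below is a heuristic based only on the statements posted in this thread: they are `INSERT ... SELECT` statements that write to a `__idx_<number>_...` table, carry an `ob_ddl_schema_version(...)` hint, and read `as of snapshot <number>`. The function name `looks_like_index_maintenance` is hypothetical, and the markers are assumptions that may not hold on other versions.

```python
import re

# Markers observed in the statements posted in this thread (assumption:
# other versions may generate different text).
_MARKERS = (
    re.compile(r"__idx_\d+_", re.IGNORECASE),
    re.compile(r"ob_ddl_schema_version\s*\(", re.IGNORECASE),
    re.compile(r"\bas\s+of\s+snapshot\s+\d+", re.IGNORECASE),
)


def looks_like_index_maintenance(sql_text: str) -> bool:
    """Return True if the text resembles a background index-refresh INSERT."""
    if not sql_text.lstrip().upper().startswith("INSERT"):
        return False
    hits = sum(1 for pattern in _MARKERS if pattern.search(sql_text))
    # Require two of the three markers so that a partly truncated
    # statement is still recognized.
    return hits >= 2


maintenance_sql = (
    "INSERT INTO tuya_aimemory_prod.__idx_500612_idx_1765766796854809 "
    "SELECT /*+ ob_ddl_schema_version(ai_memory_alphago_memory_fact, 1765766797204232) */ "
    "biz_time FROM tuya_aimemory_prod.ai_memory_alphago_memory_fact "
    "as of snapshot 1765766797235640000"
)
business_sql = "INSERT INTO ai_doc (id) VALUES (1)"

print(looks_like_index_maintenance(maintenance_sql))
print(looks_like_index_maintenance(business_sql))
```

Requiring two markers rather than all three is a deliberate trade-off: SQL text shown in monitoring views is often truncated, so a stricter rule would miss some statements.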

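Can a maintenance window be specified for this operation? Right now it occurs at random times and cannot be controlled. As for upgrading, I have heard that once you reach a certain version, going any higher requires a data migration, whereas lower versions can be upgraded directly, so we have no plans to upgrade for now.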

淇铭

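December 16, 2025, 10:20

#15

A full refresh check runs every 24 hours. If the newly added data exceeds 20% of the existing data, a full refresh is triggered automatically. The full refresh runs asynchronously in the background: it first builds a new index and then replaces the old one. The old index remains available during the rebuild, but the overall process is relatively slow. https://www.oceanbase.com/docs/common-oceanbase-database-cn-1000000002012936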
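The trigger rule described above can be sketched in a few lines of Python to estimate whether the next daily check is likely to start a full refresh. This is an illustration of the stated rule, not OceanBase's actual implementation. The function name `needs_full_refresh`, the row-count inputs, and the behavior for an empty index are assumptions.

```python
def needs_full_refresh(existing_rows: int, new_rows: int, threshold_pct: int = 20) -> bool:
    """Return True if new data exceeds threshold_pct percent of existing data.

    Integer arithmetic is used so that exactly 20% does not trigger.
    """
    if existing_rows <= 0:
        # Assumption: with no existing data, any new row counts as exceeding.
        return new_rows > 0
    return new_rows * 100 > existing_rows * threshold_pct


# Illustrative row counts, not taken from the tables in this thread.
print(needs_full_refresh(1_000_000, 250_000))  # 25% new data
print(needs_full_refresh(1_000_000, 200_000))  # exactly 20% new data
print(needs_full_refresh(1_000_000, 50_000))   # 5% new data
```

Under this rule, a table that receives a large batch of writes in one day would cross the threshold and be rebuilt at the next check, which would match the earlier observation that the slow statement was the one that had updated a large amount of data.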