【 Environment 】Production

【 OB or other component 】OB

【 Version 】

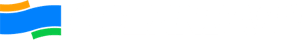

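【 Problem description 】The OceanBase installation hangs at the obshell bootstrap step.

The warning above reports insufficient memory: 234G is required, but only 153G is free.

I freed up memory and reinstalled, but it still fails:

Please provide the YAML file.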
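The comparison behind that warning can be repeated by hand on each node. Below is a minimal sketch, assuming a Linux host whose `/proc/meminfo` reports `MemAvailable` in kB. The function names are illustrative, the 234 figure is simply taken from the warning, and obd's own pre-check may compute available memory differently (for example, it may treat "G" differently or count cached memory).

```python
import re


def available_gib(meminfo_text: str) -> float:
    """Return the MemAvailable value from /proc/meminfo-style text, in GiB."""
    match = re.search(r"^MemAvailable:\s+(\d+)\s+kB", meminfo_text, re.MULTILINE)
    if match is None:
        raise ValueError("MemAvailable not found in meminfo text")
    # /proc/meminfo reports kB (KiB); 1 GiB = 1024 * 1024 KiB.
    return int(match.group(1)) / (1024 * 1024)


def has_enough_memory(meminfo_text: str, required_gib: float) -> bool:
    """True if the available memory covers the required amount."""
    return available_gib(meminfo_text) >= required_gib
```

On a node, this could be called as `has_enough_memory(open("/proc/meminfo").read(), 234)`.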

user:

username: oceanbase

password: 12345678

port: 22

oceanbase-ce:

version: 4.4.1.0

release: 100000032025101610.el8

package_hash: 1309bc20bff8d9e64d19e9cf7433798a7c696452

192.168.37.142:

zone: zone1

datafile_maxsize: 30T

192.168.37.143:

zone: zone1

datafile_maxsize: 30T

192.168.37.144:

zone: zone1

datafile_maxsize: 30T

192.168.37.145:

zone: zone1

datafile_maxsize: 30T

192.168.37.146:

zone: zone1

datafile_maxsize: 30T

192.168.37.147:

zone: zone1

datafile_maxsize: 30T

192.168.37.148:

zone: zone1

datafile_maxsize: 30T

192.168.37.149:

zone: zone1

datafile_maxsize: 30T

192.168.37.150:

zone: zone1

datafile_maxsize: 30T

192.168.37.151:

zone: zone1

datafile_maxsize: 30T

192.168.37.152:

zone: zone1

datafile_maxsize: 30T

192.168.37.153:

zone: zone2

datafile_maxsize: 30T

192.168.37.154:

zone: zone2

datafile_maxsize: 30T

192.168.37.155:

zone: zone2

datafile_maxsize: 30T

192.168.37.156:

zone: zone2

datafile_maxsize: 30T

192.168.37.157:

zone: zone2

datafile_maxsize: 30T

192.168.37.158:

zone: zone2

datafile_maxsize: 30T

192.168.37.159:

zone: zone2

datafile_maxsize: 30T

192.168.37.160:

zone: zone2

datafile_maxsize: 30T

192.168.37.161:

zone: zone2

datafile_maxsize: 30T

192.168.37.162:

zone: zone2

datafile_maxsize: 30T

192.168.37.163:

zone: zone2

datafile_maxsize: 30T

192.168.37.164:

zone: zone3

datafile_maxsize: 30T

192.168.37.165:

zone: zone3

datafile_maxsize: 30T

192.168.37.166:

zone: zone3

datafile_maxsize: 30T

192.168.37.167:

zone: zone3

datafile_maxsize: 30T

192.168.37.168:

zone: zone3

datafile_maxsize: 30T

192.168.37.169:

zone: zone3

datafile_maxsize: 30T

192.168.37.170:

zone: zone3

datafile_maxsize: 30T

192.168.37.171:

zone: zone3

datafile_maxsize: 30T

192.168.37.172:

zone: zone3

datafile_maxsize: 30T

192.168.37.173:

zone: zone3

datafile_maxsize: 30T

192.168.37.174:

zone: zone3

datafile_maxsize: 31T

servers:

- 192.168.37.142

- 192.168.37.153

- 192.168.37.164

- 192.168.37.143

- 192.168.37.144

- 192.168.37.145

- 192.168.37.146

- 192.168.37.147

- 192.168.37.148

- 192.168.37.149

- 192.168.37.150

- 192.168.37.151

- 192.168.37.152

- 192.168.37.154

- 192.168.37.155

- 192.168.37.156

- 192.168.37.157

- 192.168.37.158

- 192.168.37.159

- 192.168.37.160

- 192.168.37.161

- 192.168.37.162

- 192.168.37.163

- 192.168.37.165

- 192.168.37.166

- 192.168.37.167

- 192.168.37.168

- 192.168.37.169

- 192.168.37.170

- 192.168.37.171

- 192.168.37.172

- 192.168.37.173

- 192.168.37.174

global:

appname: oceanbase_olap

root_password: Oceanbase3411@

mysql_port: 2881

rpc_port: 2882

data_dir: /data01/oceanbase/data

redo_dir: /data02/oceanbase/log

obshell_port: 2886

home_path: /home/oceanbase/oceanbase_olap/oceanbase

scenario: htap

cluster_id: 1764039277

ocp_agent_monitor_password: 7P3nJtvqMN

proxyro_password: 5xZe60D9qs

enable_syslog_wf: false

max_syslog_file_count: 16

memory_limit: 361G

datafile_size: 1T

system_memory: 29G

log_disk_size: 1T

cpu_count: 126

datafile_next: 3T

depends:

- ob-configserver

obproxy-ce:

version: 4.3.5.0

package_hash: 73d0e9b4cecb53c8ba91f3ef50ac6742baa8be57

release: 3.el8

servers:

- 192.168.37.175

global:

prometheus_listen_port: 2884

listen_port: 2883

rpc_listen_port: 2885

home_path: /home/oceanbase/oceanbase_olap/obproxy

obproxy_sys_password: (o~DG(el_|f)lyj

skip_proxy_sys_private_check: true

enable_strict_kernel_release: false

enable_cluster_checkout: false

192.168.37.175:

proxy_id: 3911

client_session_id_version: 2

depends:

- oceanbase-ce

- ob-configserver

obagent:

version: 4.2.2

package_hash: bf152b880953c2043ddaf80d6180cf22bb8c8ac2

release: 100000042024011120.el8

servers:

- 192.168.37.142

- 192.168.37.143

- 192.168.37.144

- 192.168.37.145

- 192.168.37.146

- 192.168.37.147

- 192.168.37.148

- 192.168.37.149

- 192.168.37.150

- 192.168.37.151

- 192.168.37.152

- 192.168.37.153

- 192.168.37.154

- 192.168.37.155

- 192.168.37.156

- 192.168.37.157

- 192.168.37.158

- 192.168.37.159

- 192.168.37.160

- 192.168.37.161

- 192.168.37.162

- 192.168.37.163

- 192.168.37.164

- 192.168.37.165

- 192.168.37.166

- 192.168.37.167

- 192.168.37.168

- 192.168.37.169

- 192.168.37.170

- 192.168.37.171

- 192.168.37.172

- 192.168.37.173

- 192.168.37.174

global:

monagent_http_port: 8088

mgragent_http_port: 8089

home_path: /home/oceanbase/oceanbase_olap/obagent

http_basic_auth_password: K2kO7tkO1

ob_monitor_status: active

depends:

- oceanbase-ce

ob-configserver:

version: 1.0.0

release: 2.el8

servers:

- 192.168.37.141

global:

listen_port: 8080

home_path: /home/oceanbase/oceanbase_olap/obconfigserver

prometheus:

version: 2.37.1

release: 10000102022110211.el8

servers:

- 192.168.37.141

global:

port: 9090

basic_auth_users:

admin: Oceanbase3411@#

home_path: /home/oceanbase/oceanbase_olap/prometheus

depends:

- obagent

grafana:

version: 7.5.17

release: '1'

servers:

- 192.168.37.141

global:

port: 3000

login_password: Oceanbase3411@#

home_path: /home/oceanbase/oceanbase_olap/grafana

depends:

- prometheus

alertmanager:

version: 0.28.1

release: 32025073111.el8

servers:

- 192.168.37.142

global:

receivers:

- mock_webhook

port: 9093

basic_auth_users:

admin: oclz+P6pH

home_path: /home/oceanbase/oceanbase_olap/alertmanager

mock_webhook:

receiver_type: webhook

url: http://127.0.0.1:5001/

depends:

- mock_webhook

- prometheus
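With this many servers it is easy to mis-assign a zone, so the per-server entries in the config above are worth tallying before a deployment. The following is a rough sketch, assuming the config text has each server as an `IP:` line followed by a `zone: name` line, as in the pasted form; `servers_per_zone` is a hypothetical helper, not part of obd.

```python
import re
from collections import Counter

# A line that is only an IPv4 address followed by a colon, e.g. "192.168.37.142:".
IP_KEY = re.compile(r"^(\d{1,3}(?:\.\d{1,3}){3}):\s*$")
# A zone assignment line, e.g. "zone: zone1".
ZONE = re.compile(r"^zone:\s*(\S+)\s*$")


def servers_per_zone(config_text: str) -> Counter:
    """Count servers per zone from 'IP:' / 'zone: name' line pairs."""
    counts = Counter()
    current_ip = None
    for raw in config_text.splitlines():
        line = raw.strip()
        ip_match = IP_KEY.match(line)
        if ip_match:
            current_ip = ip_match.group(1)
            continue
        zone_match = ZONE.match(line)
        if zone_match and current_ip is not None:
            counts[zone_match.group(1)] += 1
            current_ip = None  # one zone per server entry
    return counts
```

Applied to the `oceanbase-ce` section above, the tally should come out to 11 servers in each of zone1, zone2, and zone3 (33 in total). Server entries without a `zone` key, such as the obproxy node, are not counted.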

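Is it still stuck at the obshell bootstrap step?

Do you have OCP? You can deploy OB with OCP. obd has probably not been tested on a cluster this large.

Alternatively, please provide the obshell logs. The observer's log directory contains a log_obshell directory, which holds the obshell logs.

We run a private cloud in our own data center, so we can't use OCP, can we?

This log isn't on the machine where obd is installed, is it? I couldn't find it.

It is on the observer nodes. And yes, you can install OCP.

Your cluster is quite large, so I recommend deploying it with OCP. Download the ocp-all-in-one package, set aside one machine, and use the obd web GUI to deploy OCP on it.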

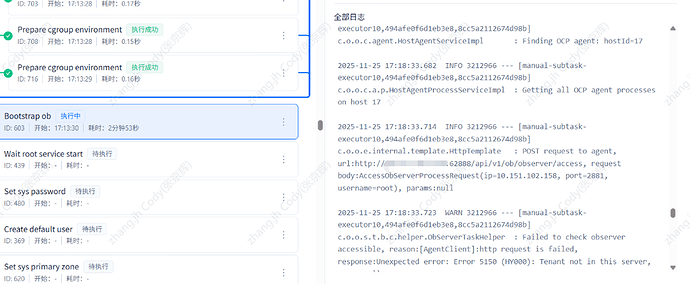

I checked the log_obshell logs on one node and found entries like these:

[GIN-debug] GET /rpc/v1/maintainer --> github.com/oceanbase/obshell/agent/rpc.getMaintainerHandler (12 handlers)

[GIN-debug] POST /rpc/v1/maintainer/update --> github.com/oceanbase/obshell/agent/rpc.updateAllAgentsHandler (12 handlers)

2025/11/25 14:20:58 /home/jenkins/agent/workspace/ob_artifacte_local_artifact/ob_source_code_dir/agent/secure/service.go:110 record not found

[0.059ms] [rows:0] SELECT `value` FROM `ocs_info` WHERE name="agentRootPwd" ORDER BY `ocs_info`.`name` LIMIT 1

2025/11/25 14:20:58 /home/jenkins/agent/workspace/ob_artifacte_local_artifact/ob_source_code_dir/agent/repository/db/oceanbase/builder.go:78

[error] failed to initialize database, got error Error 1049 (42000): Unknown database 'ocs'

[GIN] 2025/11/25 - 14:22:57 | 200 | 351.981783ms | 10.151.102.141 | GET "/api/v1/info"

[GIN] 2025/11/25 - 14:22:58 | 200 | 342.173966ms | 10.151.102.141 | GET "/api/v1/info"
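With 33 observer nodes, picking the `[error]` lines out of each log_obshell directory by eye gets tedious. Here is a small sketch that does the filtering, assuming the logs are plain text. The function name is made up for illustration, and the directory path in the usage note is an assumption built from the `home_path` in the config plus `log/log_obshell`; adjust it to wherever the observer log directory actually is on your nodes.

```python
from pathlib import Path
from typing import Iterator, Tuple


def find_error_lines(log_dir: str, marker: str = "[error]") -> Iterator[Tuple[str, int, str]]:
    """Yield (file name, line number, text) for each log line containing marker."""
    for path in sorted(Path(log_dir).rglob("*")):
        if not path.is_file():
            continue
        # errors="replace" keeps the scan going if a file contains non-text bytes.
        with path.open(errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                if marker in line:
                    yield path.name, number, line.strip()
```

For example, `for hit in find_error_lines("/home/oceanbase/oceanbase_olap/oceanbase/log/log_obshell"): print(hit)` would list lines such as the `Unknown database 'ocs'` entry shown above.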

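Please provide the task log so we can take a look.

It works now. The bootstrap step just takes a while. Thanks.

The time the bootstrap step takes grows in proportion to the number of nodes. Could you tell us which company you are with?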