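【 Environment 】Test environment

【 OB or other component 】

【 Version 】

【Problem description】Describe the problem clearly and precisely

【Steps to reproduce】Operations performed before and after the problem appeared

【Symptoms and impact】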

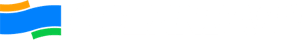

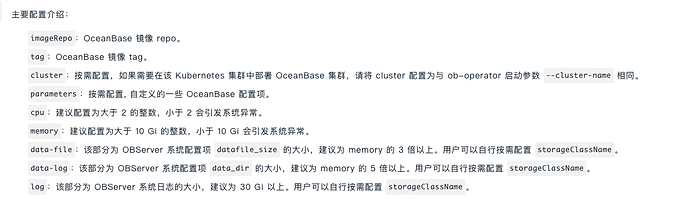

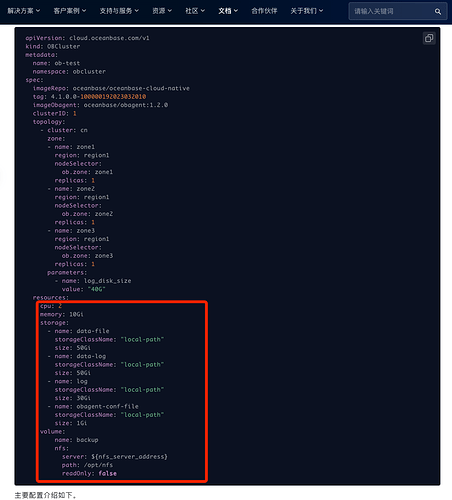

A test deployment of OB 4.0 on k8s failed. I followed the documentation at https://www.oceanbase.com/docs/community-observer-cn-10000000000901210

【Attachments】

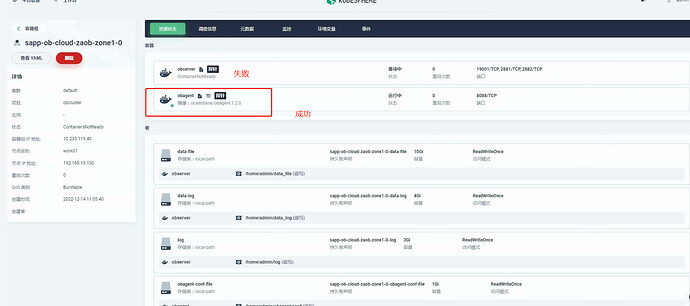

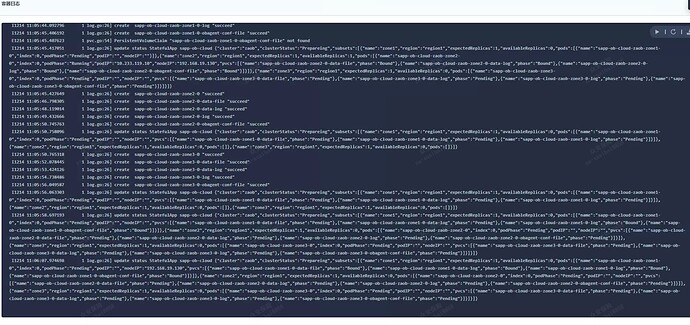

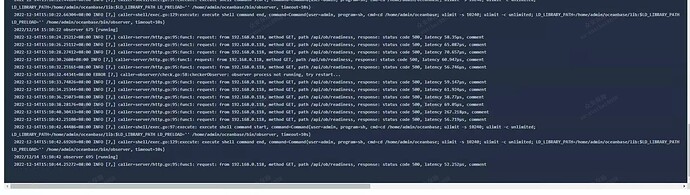

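Checking the container logs:

The agent log shows no network problems.

The observer log reports a network exception: caller=server/http.go:95:func1. I do not know what this refers to.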

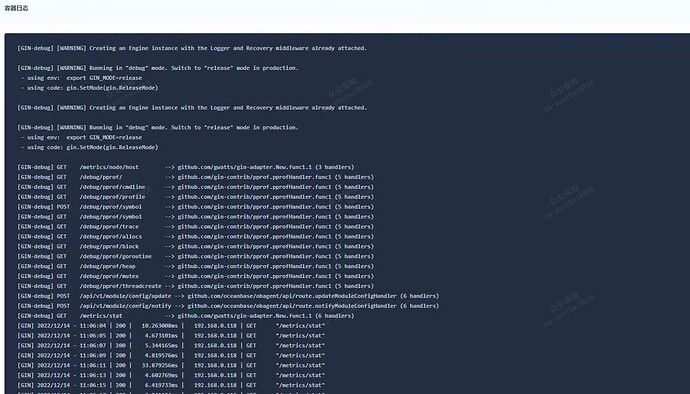

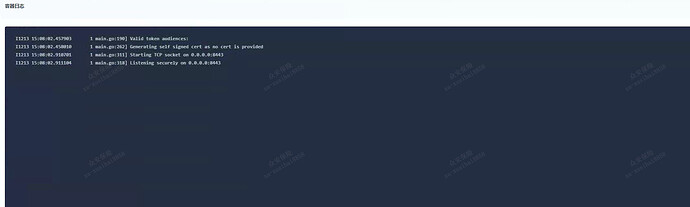

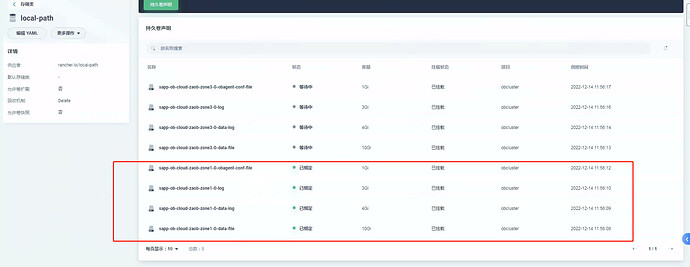

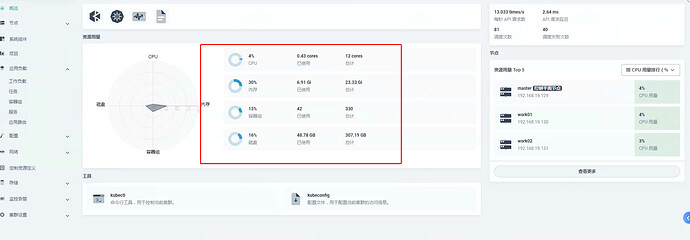

Below are screenshots showing that the management node is working normally.

Container log of the manager

Log of rbac-proxy

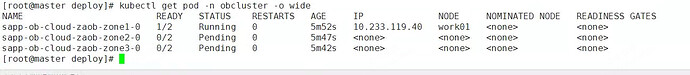

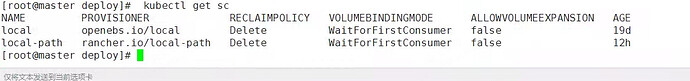

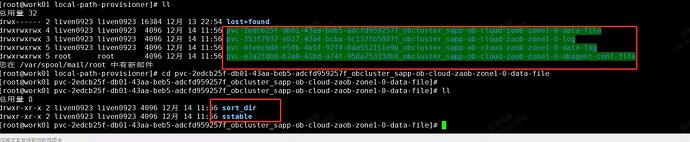

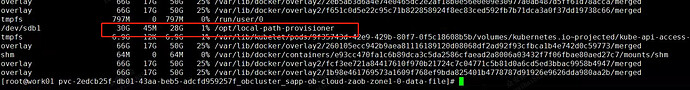

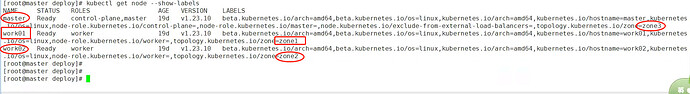

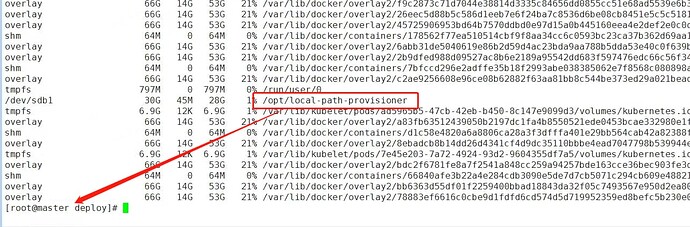

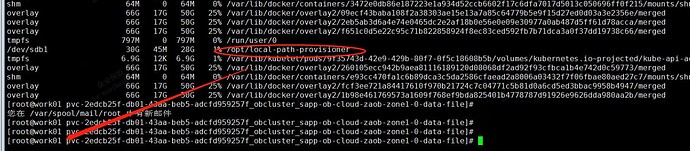

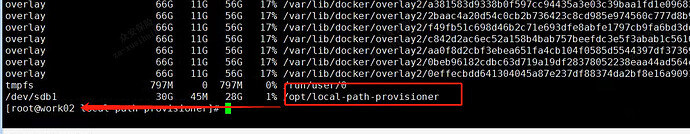

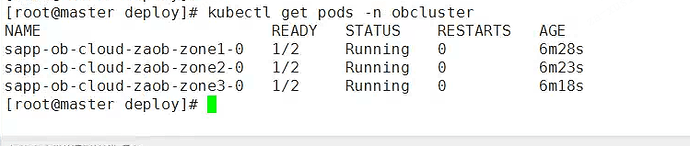

Output from the command line

[root@master deploy]# kubectl describe pod sapp-ob-cloud-zaob-zone1-0 -n obcluster

Name: sapp-ob-cloud-zaob-zone1-0

Namespace: obcluster

Priority: 0

Node: work01/192.168.19.130

Start Time: Wed, 14 Dec 2022 11:05:56 +0800

Labels: app=sapp-ob-cloud

index=0

subset=zone1

Annotations: cni.projectcalico.org/containerID: ae04f926c236f1a7592d6d2c589d581e181a4aa73ed68edd0cfdf25238a864c1

cni.projectcalico.org/podIP: 10.233.119.40/32

cni.projectcalico.org/podIPs: 10.233.119.40/32

Status: Running

IP: 10.233.119.40

IPs:

IP: 10.233.119.40

Containers:

observer:

Container ID: docker://e7d1674df1cb86247cc23a4cd550c0e426094f5bb493c3e2c101176f13bdb1f2

Image: oceanbasedev/oceanbase-cn:v3.1.4-10000092022071511-snapshot-08172042

Image ID: docker-pullable://oceanbasedev/oceanbase-cn@sha256:f20aa5c81c6dbed4fd4fa05a3e154eb7a45348352d125f504d147ae7a7e97529

Ports: 19001/TCP, 2881/TCP, 2882/TCP

Host Ports: 0/TCP, 0/TCP, 0/TCP

State: Running

Started: Wed, 14 Dec 2022 11:06:00 +0800

Ready: False

Restart Count: 0

Limits:

cpu: 2

memory: 4Gi

Requests:

cpu: 2

memory: 4Gi

Readiness: http-get http://:19001/api/ob/readiness delay=0s timeout=1s period=2s #success=1 #failure=3

Environment:

Mounts:

/home/admin/data_file from data-file (rw)

/home/admin/data_log from data-log (rw)

/home/admin/log from log (rw)

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-6gx6l (ro)

obagent:

Container ID: docker://26a8143ea3aa0141ac1cbc7f319da5726d807d4ee6ae5660b02cff2e8fe282f8

Image: oceanbase/obagent:1.2.0

Image ID: docker-pullable://oceanbase/obagent@sha256:ac37a475b3c8ac88ed80f231adc8b079eda8c112dd23f5ec5b1056b83dada025

Port: 8088/TCP

Host Port: 0/TCP

State: Running

Started: Wed, 14 Dec 2022 11:06:03 +0800

Ready: True

Restart Count: 0

Readiness: http-get http://:8088/metrics/stat delay=0s timeout=1s period=2s #success=1 #failure=3

Environment:

Mounts:

/home/admin/obagent/conf from obagent-conf-file (rw)

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-6gx6l (ro)

Conditions:

Type Status

Initialized True

Ready False

ContainersReady False

PodScheduled True

Volumes:

data-file:

Type: PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)

ClaimName: sapp-ob-cloud-zaob-zone1-0-data-file

ReadOnly: false

data-log:

Type: PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)

ClaimName: sapp-ob-cloud-zaob-zone1-0-data-log

ReadOnly: false

log:

Type: PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)

ClaimName: sapp-ob-cloud-zaob-zone1-0-log

ReadOnly: false

obagent-conf-file:

Type: PersistentVolumeClaim (a reference to a PersistentVolumeClaim in the same namespace)

ClaimName: sapp-ob-cloud-zaob-zone1-0-obagent-conf-file

ReadOnly: false

kube-api-access-6gx6l:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional:

DownwardAPI: true

QoS Class: Burstable

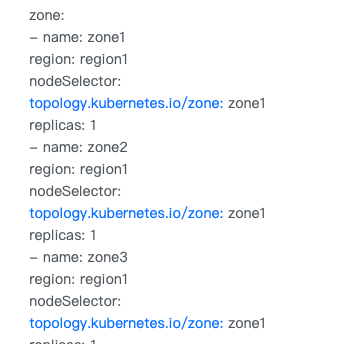

Node-Selectors: topology.kubernetes.io/zone=zone1

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

Warning FailedScheduling 6m51s default-scheduler 0/3 nodes are available: 3 persistentvolumeclaim "sapp-ob-cloud-zaob-zone1-0-data-file" not found.

Warning FailedScheduling 6m50s default-scheduler 0/3 nodes are available: 3 persistentvolumeclaim "sapp-ob-cloud-zaob-zone1-0-data-log" not found.

Warning FailedScheduling 6m47s default-scheduler 0/3 nodes are available: 3 persistentvolumeclaim "sapp-ob-cloud-zaob-zone1-0-log" not found.

Normal Scheduled 6m34s default-scheduler Successfully assigned obcluster/sapp-ob-cloud-zaob-zone1-0 to work01

Normal CreatedPod 6m51s statefulapp-controller create Podsapp-ob-cloud-zaob-zone1-0

Normal Pulling 6m33s kubelet Pulling image "oceanbasedev/oceanbase-cn:v3.1.4-10000092022071511-snapshot-08172042"

Normal Pulling 6m31s kubelet Pulling image "oceanbase/obagent:1.2.0"

Normal Pulled 6m31s kubelet Successfully pulled image "oceanbasedev/oceanbase-cn:v3.1.4-10000092022071511-snapshot-08172042" in 2.360159039s

Normal Started 6m31s kubelet Started container observer

Normal Created 6m31s kubelet Created container observer

Normal Pulled 6m28s kubelet Successfully pulled image "oceanbase/obagent:1.2.0" in 2.387843357s

Normal Created 6m28s kubelet Created container obagent

Normal Started 6m28s kubelet Started container obagent

Warning Unhealthy 6m28s kubelet Readiness probe failed: Get "http://10.233.119.40:8088/metrics/stat": dial tcp 10.233.119.40:8088: connect: connection refused

Warning Unhealthy 92s (x156 over 6m28s) kubelet Readiness probe failed: HTTP probe failed with statuscode: 500

[root@master deploy]#
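The events above show the observer readiness probe (`http-get http://:19001/api/ob/readiness`) repeatedly answering with status code 500. To see what the endpoint actually returns, the same request can be sent by hand. The following is only a minimal sketch: the function name `probe_readiness` is made up for illustration, the pod IP, port and path are the ones from the describe output, and it assumes the script runs somewhere that can reach the pod network (for example on a cluster node).

```python
import urllib.error
import urllib.request
from typing import Optional, Tuple


def probe_readiness(url: str, timeout: float = 1.0) -> Tuple[Optional[int], str]:
    """Send one HTTP GET, roughly like the kubelet readiness probe.

    Returns (status_code, body). status_code is None when no HTTP
    response was received at all (refused connection, timeout, ...).
    """
    # Ignore any http_proxy environment settings so the request goes
    # straight to the pod IP.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout) as resp:
            return resp.status, resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:
        # Error statuses such as the 500 seen in the events end up here;
        # the response body may contain a hint about the cause.
        return err.code, err.read().decode("utf-8", errors="replace")
    except OSError as err:
        # URLError is a subclass of OSError: connection refused,
        # timeout, unreachable host and similar failures.
        return None, str(err)
```

Calling `probe_readiness("http://10.233.119.40:19001/api/ob/readiness")` and printing the result would show the status code together with the response body, which the `kubectl describe` events do not include. The default `timeout=1.0` mirrors the `timeout=1s` of the probe definition.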

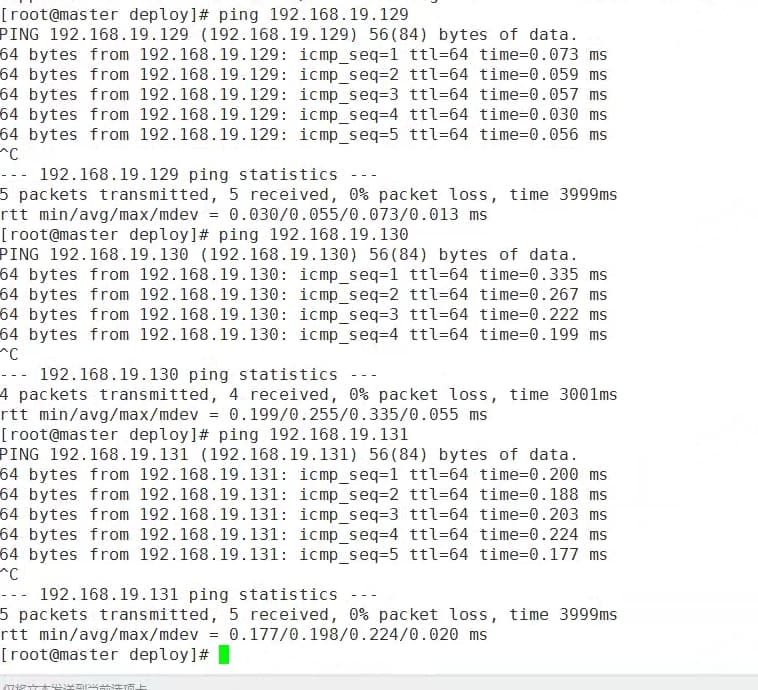

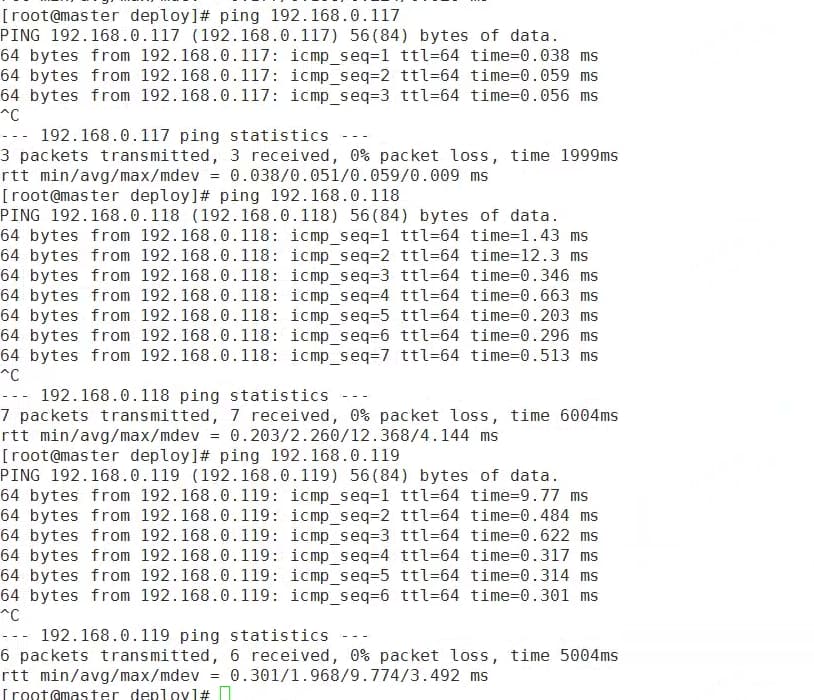

Related ping screenshots

Private network

The public network NIC shows no latency either (there is no network latency between the observers).
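Since ping only exercises ICMP, it does not show whether the TCP ports of the observer container are reachable. A TCP-level check of the ports listed in the describe output (19001, 2881, 2882) could complement the ping results. This is a minimal sketch under that assumption; the helper names `tcp_reachable` and `check_ports` are hypothetical, and whether TCP reachability has anything to do with the failure here is not established.

```python
import socket
from typing import Dict, Iterable


def tcp_reachable(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, no route to host, and so on.
        return False


def check_ports(host: str, ports: Iterable[int] = (2881, 2882, 19001)) -> Dict[int, bool]:
    """Check each port once and report the result per port.

    The default ports are the ones shown for the observer container
    in the describe output.
    """
    return {port: tcp_reachable(host, port) for port in ports}
```

Running `check_ports("10.233.119.40")` from another observer pod or from a node would return a mapping from each port to `True` or `False`.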